Your employees aren’t struggling to find information because they’re disorganized. They’re struggling because the information lives in dozens of different tools that don’t talk to each other.

The average knowledge worker uses more than a dozen SaaS tools — Slack, Confluence, Jira, Google Drive, Salesforce, ServiceNow, and more — each with its own search bar, none of them connected. When someone needs to find a decision made six months ago, a policy buried in an HR tool, or a customer record in the CRM, they don’t search. They ask a colleague. Or they give up.

That friction has a price. McKinsey found that employees spend nearly 20% of their working week — roughly one full day — just looking for information internally. At average knowledge worker salaries, that’s over $3 million in lost productivity annually for a 1,000-person organization, before you count the decisions made on incomplete information.

AI enterprise search is built to fix this — not by making existing search bars slightly better, but by replacing the whole model. One interface, plain language questions, direct answers drawn from every tool your company uses. No link-wading required.

This guide is for IT leaders, CIOs, and knowledge managers evaluating enterprise search platforms, building the business case, or trying to understand how fast this category has moved. It covers what the technology actually does, how to evaluate vendors, and how to implement without a year-long rollout.

Key Takeaways

- AI enterprise search uses LLMs and semantic retrieval to surface direct, cited answers from across every connected tool — not keyword-matched document links.

- Employees lose roughly 20% of their working week to information-finding. Strong AI enterprise search deployments recover 30–50% of that time.

- Permission-aware retrieval, enforced dynamically at query time, is what makes AI enterprise search safe for sensitive enterprise data.

- The strongest platforms are moving from retrieval to action — AI agents that complete tasks, not just answer questions.

What Is AI Enterprise Search?

Quick Answer: AI enterprise search uses large language models and semantic retrieval to understand the intent behind a query and surface direct answers — not just links — from across an organization’s connected data sources. Unlike traditional keyword search, it interprets meaning, respects permissions dynamically, and synthesizes responses grounded in your company’s actual content.

AI enterprise search is software that lets employees find information — across every tool and data source in an organization — using natural language questions, and returns direct, context-aware answers rather than a ranked list of documents.

It helps to understand this by contrast.

Traditional enterprise search works like a library catalog. It indexes documents, matches keywords, and returns a list of files containing the words you typed. Search for “parental leave policy” and you might get 40 results: the current policy, three previous versions, a Slack thread where someone asked about it, an HR town hall deck, a Confluence page that mentions it in passing. You still have to open each one to find the actual answer.

AI enterprise search reads the policy for you. Ask “how many weeks of parental leave do we get?” and the system reads across your connected sources, finds the most authoritative answer, and responds: “14 weeks for primary caregivers, as documented in [HR Policy v4.2, updated March 2026].” It cites the source. And it respects your permissions — if you can’t access a document, its contents won’t appear in your results.

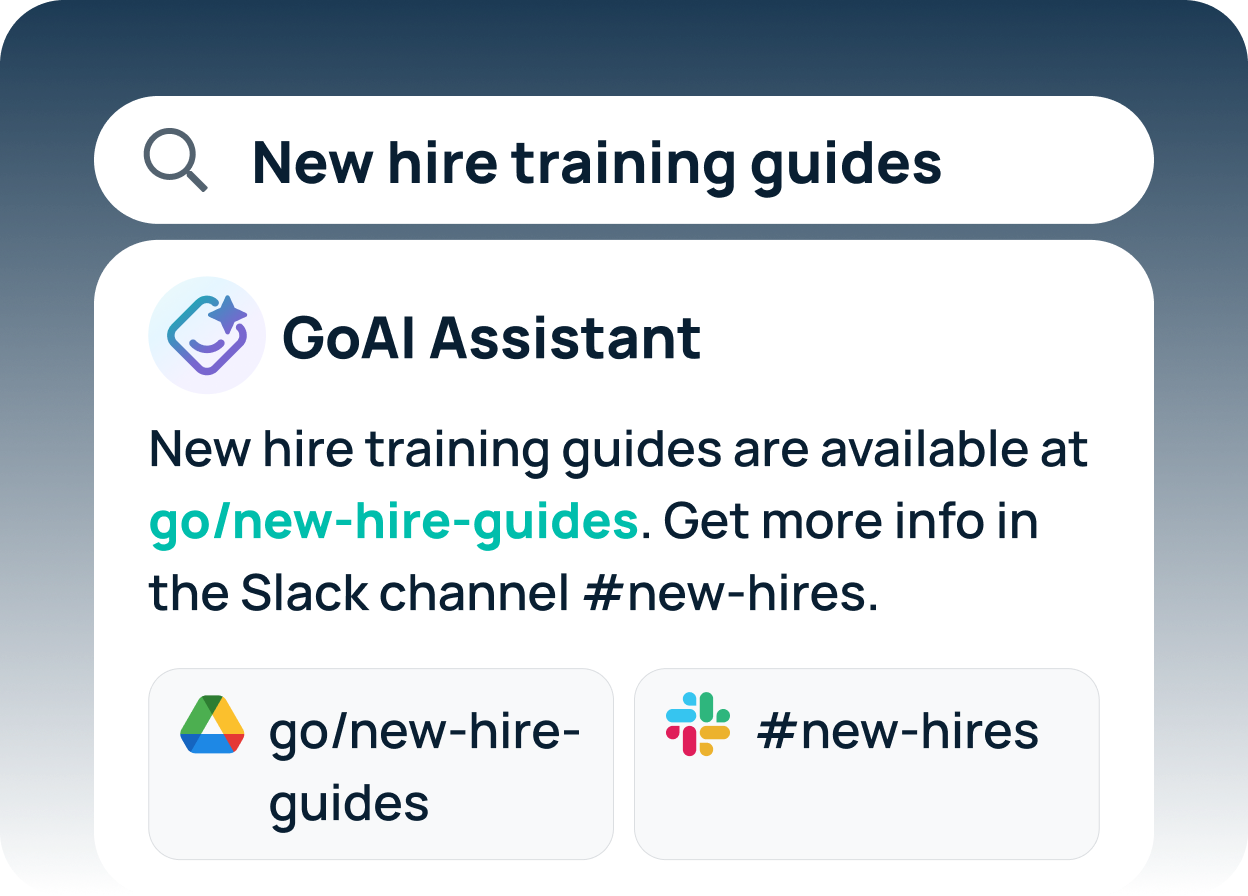

This is what’s often called an internal search assistant — an AI-powered tool that behaves less like a search engine and more like a well-informed colleague: it knows where your company’s knowledge lives, understands the question you’re actually asking, and gives you the answer rather than a pile of documents to sort through. The underlying technology is AI enterprise search; “internal search assistant” is simply how it feels to use it.

The core technologies making this possible

Several technologies have converged to make this possible:

Large language models (LLMs) — the same technology behind ChatGPT — give search systems the ability to understand natural language, not just match keywords. Ask “what did we decide about the vendor contract?” and the system understands that “we” means your organization, “decide” implies a documented past action, and “vendor contract” is a business document — even if those exact words don’t appear together anywhere.

Semantic search and vector embeddings allow the system to find conceptually related content, not just exact matches. A document about “paid time off for new parents” is relevant to a question about “parental leave,” even though the words don’t overlap. Vector embeddings represent meaning as mathematical coordinates, so relevance becomes a calculation rather than a string match.
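To make "relevance becomes a calculation" concrete, here is a minimal sketch of the most common such calculation, cosine similarity. The three-number vectors are invented for illustration. A real embedding model turns each document and query into a vector with hundreds or thousands of dimensions, and platforms typically search an approximate nearest-neighbor index rather than comparing the query against every document.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical 3-dimensional "embeddings"; the numbers are made up.
documents = {
    "Paid time off for new parents": [0.9, 0.1, 0.2],
    "Quarterly sales targets": [0.1, 0.9, 0.3],
    "Office Wi-Fi setup guide": [0.2, 0.2, 0.9],
}
query_vector = [0.85, 0.15, 0.25]  # hypothetical embedding of "parental leave"

ranked = sorted(
    documents,
    key=lambda title: cosine_similarity(query_vector, documents[title]),
    reverse=True,
)
print(ranked[0])  # Paid time off for new parents
```

No word in "parental leave" appears in the top document's title; the match comes entirely from the vectors pointing in a similar direction.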

Retrieval-Augmented Generation (RAG) is the architecture that keeps answers grounded. Rather than generating answers from training data — which would mean hallucinating company-specific facts — RAG first retrieves relevant documents from your internal sources, then uses the LLM to synthesize an answer from that content. The LLM is the reader and writer; your company’s data is the source of truth.
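The retrieve-then-generate split can be sketched in a few lines. This is an illustration under stated assumptions, not any vendor's implementation. `retrieve` is a deliberately crude word-overlap stand-in for the real indexes, `Passage` and `build_grounded_prompt` are hypothetical names, and the call that sends the prompt to an LLM is left out because it depends on your model provider.

```python
import re
from dataclasses import dataclass


@dataclass
class Passage:
    source: str  # document title or link in the source system
    text: str


def _words(text: str) -> set[str]:
    """Lowercase alphanumeric tokens, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[Passage], k: int = 2) -> list[Passage]:
    """Stand-in retriever: rank passages by words shared with the query.
    A real platform would query its keyword and vector indexes instead."""
    query_words = _words(query)
    scored = [(len(query_words & _words(p.text)), p) for p in corpus]
    scored = [(score, p) for score, p in scored if score > 0]  # drop non-matches
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:k]]


def build_grounded_prompt(query: str, passages: list[Passage]) -> str:
    """Put numbered sources ahead of the question and instruct the model to
    answer only from them, citing [n], or to say it doesn't know."""
    sources = "\n".join(
        f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer using only the sources below and cite them as [n]. "
        "If the sources do not contain the answer, say you don't know.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )


corpus = [
    Passage("HR Policy v4.2", "Primary caregivers receive 14 weeks of parental leave."),
    Passage("IT Runbook", "Reset your VPN password from the self-service portal."),
]
question = "How many weeks of parental leave do we get?"
prompt = build_grounded_prompt(question, retrieve(question, corpus))
print(prompt)  # this string is what would be sent to the LLM
```

The model never sees the IT runbook, and the numbered sources are what make a citation like "[HR Policy v4.2]" in the final answer possible.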

Permission-aware indexing ensures the system respects the access controls already configured in your tools. If an employee can’t access a file in Google Drive, they won’t see its contents in search results. This isn’t optional — it’s table stakes for any enterprise deployment.

Agentic capabilities are the newest layer. Beyond finding and answering, agentic AI can take action — resetting a password, creating a ticket, summarizing a meeting, triggering a workflow — directly from the search interface. This is where enterprise search is heading: from a tool you query to an assistant that helps you act.

What AI enterprise search is not

It’s not a chatbot bolted onto your intranet. Answer quality depends entirely on the underlying data connections — how many sources are indexed, how current the data is, how well permissions are enforced.

It’s not a replacement for your existing tools. Slack, Confluence, and Salesforce don’t go away. AI enterprise search sits on top of them, creating a unified retrieval layer that makes everything you’ve already built more accessible.

And it’s not magic. If your organization’s knowledge is undocumented, outdated, or scattered across sources that can’t be indexed, no search platform will surface it. AI enterprise search amplifies the knowledge you have — it doesn’t create knowledge you don’t.

Why Traditional Enterprise Search Falls Short

Quick Answer: Traditional enterprise search relies on keyword matching within individual tools, which means it misses synonyms, ignores context, and can’t search across systems simultaneously. The result is that employees spend an estimated 20% of their workweek — roughly one full day — searching for information that often exists but simply can’t be found.

Most organizations have tried to solve the information-finding problem at least once. They’ve deployed SharePoint, built a Confluence wiki, set up a shared Google Drive structure. And in most cases, it hasn’t really worked.

Keyword search misses the question being asked

Keyword search returns documents containing your search terms, which is different from answering your question. Type “offboarding checklist” and you’ll get every document that includes those words, including ones where the checklist is buried in paragraph six of a ten-page process guide. You still have to read to find what you need.

It gets worse when terminology differs. Your company’s documentation might say “employee exit process” instead of “offboarding checklist.” Keyword search returns nothing. Semantic search understands that they mean the same thing.

Knowledge is fragmented across too many tools

According to Okta’s 2025 Businesses at Work report, the average company now uses more than 100 SaaS applications — each with its own search bar, none connected to the others. Engineering knowledge lives in Jira comments and GitHub discussions. HR policy is in Confluence. Customer history is in Salesforce. Recent decisions are in Slack. To answer a nuanced question, an employee first needs to know which tool the answer lives in. Most of the time, they don’t.

Maintenance burden falls entirely on IT

Traditional search systems require constant upkeep: tagging documents, building taxonomies, retiring outdated content, managing permissions as teams change. In practice, this work gets deprioritized. Indices go stale. Employees stop trusting results because they keep surfacing old information. Trust erodes, and people fall back on asking colleagues directly — which is expensive and doesn’t scale.

The cost is higher than it appears

When an employee can’t find what they need, one of three things happens: they interrupt a colleague, they search manually and lose their own time, or they make a decision without the full picture. None of these outcomes is free.

McKinsey found that employees spend nearly 20% of their working week just looking for information internally. At average knowledge worker salaries, a 1,000-person organization is losing over $3 million annually to search friction alone — before counting the downstream cost of incomplete information.

47% increase in customer support productivity.

For a support team spending hours each week hunting for answers across disconnected tools, that's not a marginal gain: it's close to the output of one and a half teams from the people you already have, without adding headcount. Model N achieved it after deploying GoSearch, not by restructuring or hiring, but by giving employees faster access to knowledge they already had.

How AI Enterprise Search Works

Quick Answer: AI enterprise search works in seven steps: ingest content from connected tools, build keyword and vector indexes, sync permissions, interpret the natural language query, retrieve relevant content, generate a grounded answer with citations, and (for agentic platforms) execute any follow-on actions. Each step is continuous and automated — not a one-time setup.

Understanding the mechanics helps IT leaders evaluate platforms more clearly and set realistic expectations for deployment.

Step by Step

- Ingestion. The system connects to your existing tools via native connectors or APIs and reads content from each source: documents, messages, tickets, records, wikis, emails, and more. The breadth of your connector library determines the breadth of your search — a platform with deep, maintained connectors for the tools your organization actually uses will outperform one with a longer but shallower list.

- Indexing. Content is processed into two parallel indexes. A keyword index enables fast exact-match retrieval. A vector index stores semantic embeddings of each piece of content, capturing meaning rather than just terms. Most modern platforms build both and use a hybrid approach at query time.

- Permission sync. Alongside content, the system ingests access permissions from each connected source. Who can see which documents, channels, and records is continuously synced — so search results always respect the access controls already in place.

- Query interpretation. When an employee submits a query, the system interprets intent rather than matching keywords — determining what type of answer is needed (a fact, a document, a process, a person) and which sources are most likely to contain it.

- Retrieval. The system searches both indexes, combines results using a relevance ranking model, and filters out any content the querying user isn’t permitted to access.

- Answer generation. RAG synthesizes a direct answer from the retrieved content, with citations back to source documents. The LLM is constrained to that retrieved content — keeping answers grounded in actual company data and dramatically reducing hallucination risk.

- Action. On platforms with agentic capabilities, the answer can trigger follow-on actions — submitting a request, creating a ticket, triggering a workflow — without the employee leaving the search interface.
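Steps 2 and 5 describe building a keyword index and a vector index, then merging their results before permission filtering. One widely used, simple way to merge ranked lists is Reciprocal Rank Fusion (RRF), sketched below. Vendors may use a learned ranking model instead, so treat this as an illustration of the idea. The document IDs and the user's allowed set are hypothetical, and `k = 60` is a commonly used default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of document IDs into one list.
    A document earns 1 / (k + rank) from every list it appears in; scores add up,
    so documents that rank well in both indexes rise to the top."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical results for one query from each index, best match first.
keyword_hits = ["exit-process-v1", "offboarding-checklist", "it-asset-return"]
vector_hits = ["offboarding-checklist", "exit-process-v2", "exit-process-v1"]

# Hypothetical set of documents this user may read.
allowed_for_user = {"offboarding-checklist", "exit-process-v1", "it-asset-return"}

fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
results = [doc for doc in fused if doc in allowed_for_user]  # permission filter
print(results)
```

RRF only looks at rank positions, so it needs no calibration between keyword scores and vector similarities, which are on different scales.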

Freshness, re-indexing, and MCP

Indexing is continuous. New content is indexed as it’s created; updated content re-indexes automatically. Strong platforms update in near-real time, so a policy changed this morning is searchable this afternoon.
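Near-real-time indexing usually means syncing deltas rather than re-reading everything. A minimal sketch, assuming the source tool exposes a last-modified timestamp for each item (the records below are hypothetical): keep a cursor from the last sync and re-index only what changed after it. A production connector would also have to handle deletions and permission changes, which this sketch ignores.

```python
from datetime import datetime, timezone

# Hypothetical records as a connector might list them from a source tool.
source_documents = [
    {"id": "pto-policy", "updated_at": datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc)},
    {"id": "vpn-guide", "updated_at": datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)},
    {"id": "expense-policy", "updated_at": datetime(2026, 3, 2, 14, 30, tzinfo=timezone.utc)},
]


def incremental_sync(documents: list[dict], cursor: datetime) -> tuple[list[dict], datetime]:
    """Return the documents changed since `cursor` plus the new cursor value.
    Only these documents need re-embedding and re-indexing on this pass."""
    changed = [d for d in documents if d["updated_at"] > cursor]
    new_cursor = max((d["updated_at"] for d in changed), default=cursor)
    return changed, new_cursor


last_sync = datetime(2026, 3, 1, tzinfo=timezone.utc)
changed, last_sync = incremental_sync(source_documents, last_sync)
print([d["id"] for d in changed])  # ['pto-policy', 'expense-policy']
```

Running the sync again immediately returns nothing, because the cursor has moved past the newest change.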

The next evolution is live data access via the Model Context Protocol (MCP) — an open standard that allows AI systems to query source tools directly rather than relying on a pre-built index. Where traditional connectors take a snapshot of your data and index it, MCP connectors reach into the source system at query time and return current results. For data that changes frequently — financial records, support tickets, project status, spending data — this is a significant upgrade over even the most aggressively re-indexed snapshot.
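MCP messages are JSON-RPC 2.0, and a client asks a server to run one of its tools with a `tools/call` request carrying the tool's name and arguments. The sketch below only builds that request. The connection handshake and transport (stdio or HTTP) are omitted, and the `search_tickets` tool and its arguments are hypothetical, since each MCP server advertises its own tools through `tools/list`.

```python
import json


def mcp_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and arguments; a real server defines its own.
request = mcp_tool_call(1, "search_tickets", {"status": "open", "customer": "Acme"})
print(request)
```

The server answers with the tool's current result, which is why an MCP connector can return this morning's ticket status without waiting for a re-index.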

GoSearch has released MCP connectors for Intercom, Ramp, GitLab, Lucid, Atlassian Rovo, ThoughtSpot, and Document360 — with more added regularly. As MCP adoption grows across the industry, the line between “search” and “live system access” will continue to blur.

Why permission enforcement matters

Before returning any result, the system checks whether the requesting user has access to that content in the source system. This check must happen dynamically at query time — not from a cached snapshot taken during indexing. Permissions change constantly: people join teams, change roles, get promoted. A system that caches permissions will inevitably surface results people shouldn’t see, or hide results they should.
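The difference between a cached and a live check is easy to see in miniature. In this sketch the `live_acl` dictionary is a hypothetical stand-in for the source system's own permission API; a real platform would consult that API (or a closely synced mirror of it) for each candidate result. Documents with no known access list are denied by default.

```python
# Hypothetical live ACLs: a stand-in for asking the source system
# (for example, a Confluence space) who can read each document right now.
live_acl: dict[str, set[str]] = {
    "roadmap-2026": {"alice", "bob"},
    "handbook": {"alice", "bob", "carol"},
}

# A copy of the same ACLs captured when the index was built.
cached_acl = {doc: set(users) for doc, users in live_acl.items()}


def can_read_now(user: str, doc_id: str) -> bool:
    """Query-time check against current permissions; unknown documents are denied."""
    return user in live_acl.get(doc_id, set())


def filter_results(user: str, doc_ids: list[str]) -> list[str]:
    """Drop every candidate result the user cannot read at this moment."""
    return [doc_id for doc_id in doc_ids if can_read_now(user, doc_id)]


# Bob moves to another team after indexing; the source system revokes his access.
live_acl["roadmap-2026"].discard("bob")

print("bob" in cached_acl["roadmap-2026"])                 # True: the snapshot is stale
print(filter_results("bob", ["roadmap-2026", "handbook"]))  # ['handbook']
```

A system that filtered with `cached_acl` would keep showing Bob the roadmap until the next full permission sync.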

Where AI Enterprise Search Makes the Biggest Difference

The highest ROI from AI enterprise search comes from deploying it where information-finding friction is most expensive. That varies by team — but these are the departments where organizations consistently see the fastest, most measurable results.

IT: Ticket deflection and faster resolution

IT help desks handle a high volume of repetitive questions: password resets, access requests, software installation guidance, VPN troubleshooting. Most have documented answers. AI enterprise search lets employees self-serve before submitting a ticket — and when a ticket does come in, it helps the analyst find the relevant runbook or precedent case in seconds rather than minutes.

A Forrester Total Economic Impact study found that AI-powered service tools reduced average handling time by 40% — before accounting for tickets deflected entirely through self-service. Organizations that deploy AI enterprise search across their IT function consistently report meaningful reductions in Tier 1 ticket volume, with analysts freed to spend their time on complex infrastructure problems, security incidents, and root-cause analysis rather than answering the same ten questions every week.

HR: Policy lookup and onboarding acceleration

HR teams field the same questions on repeat: how much vacation do I have, what’s the reimbursement limit for home office equipment, how do I update my direct deposit. AI enterprise search handles these directly — surfacing the right policy with the right answer, and freeing HR to focus on work that actually requires a human.

For new hire onboarding, the impact compounds. A new employee can ask “what do I need to set up in my first week?” and get a synthesized, personalized checklist drawn from the onboarding documentation, IT provisioning guides, and team-specific resources.

Engineering: Codebase context and documentation access

Engineering teams spend significant time searching for context: understanding how a component was built, finding the relevant Jira ticket, locating documentation for an internal API, figuring out who wrote a piece of code and why. This is especially acute in large codebases or when engineers are new to a project.

AI enterprise search connected to GitHub, Jira, Confluence, and Slack gives engineers a single interface to ask questions like “why was the payment service refactored in Q3?” and get an answer synthesized from the PR description, the Jira ticket, and the Slack thread where the decision was made.

Sales: Competitive intelligence and deal support

Sales teams need fast access to competitive intelligence, case studies, pricing guidance, and product documentation — often mid-call. AI enterprise search lets reps ask “what do customers say about Glean’s pricing?” or “find me a financial services case study for a deal where cost was the deciding factor” and get an immediate answer from the latest sales enablement content — without breaking their flow to hunt across Salesforce, a shared Drive folder, and three different Slack channels.

Marketing: Content retrieval and brand consistency

Marketing teams manage a high volume of assets — briefs, campaign decks, brand guidelines, approved messaging, research reports. AI enterprise search makes it possible to find the right asset quickly and ensures teams are working from current, approved materials rather than old versions surfaced through a manual Drive search. For distributed or high-velocity teams, that consistency compounds over time.

What to Look for in an AI Enterprise Search Platform

Quick Answer: The most important evaluation criteria for AI enterprise search are integration depth, dynamic permission enforcement, answer quality with source citations, security and compliance posture, and realistic time to value. Vendors often look similar on marketing pages — the differences reveal themselves when you test with your actual tools and data.

Evaluation criteria vary by organization, but these are the factors that consistently separate the platforms worth buying from the ones that underdeliver:

- Integration depth, not integration count. Every vendor shows a logo wall of integrations. The relevant question is how deep they are. Does the Confluence connector index page comments, or just titles? Does Salesforce surface opportunity notes, or only account records? Before signing anything, ask vendors to demonstrate search across your specific tools with your specific types of content.

- Permission-aware retrieval at query time. Permissions need to be checked dynamically at query time — not cached at index time. Ask specifically: if I remove someone from a Confluence space today, when does that change take effect in search results? The answer should be “immediately” or “within minutes.” Anything else is a security risk.

- Answer quality and citation grounding. Test with questions where you know the right answer. Then test with questions where the answer is ambiguous or doesn’t exist, and watch whether the system acknowledges uncertainty or confidently fabricates. Strong platforms cite sources and express appropriate uncertainty. Weak ones confabulate — and in an enterprise context, a confident wrong answer is worse than no answer at all.

- Security and compliance posture. Non-negotiable minimums for enterprise deployment: SOC 2 Type II certification, SSO (SAML/OIDC), role-based access controls, data encryption in transit and at rest, and audit logging. For regulated industries, verify GDPR compliance, data residency options, and HIPAA readiness. Also ask: does your LLM provider use our queries to train their models? The answer matters for data governance.

- Agentic capabilities and workflow automation. If your roadmap includes automating workflows — not just answering questions — evaluate agent capabilities now, even if you won’t use them immediately. The gap between retrieval-only and action-capable platforms is growing fast, and you don’t want to migrate again in 18 months. As AI agents become more capable, the question is no longer whether to adopt them — it’s whether your search platform will be ready when you do.

- Time to value and deployment complexity. Ask for a realistic timeline from contract signing to first useful query, and ask what requires engineering involvement versus what works out of the box. Some platforms carry significant infrastructure overhead that doesn't show up in the licensing conversation: bring-your-own-cloud deployments can generate substantial monthly compute and storage costs before a single license fee is paid. Platforms that require that level of infrastructure investment before returning value are a significant IT burden, and a signal of how complex the ongoing relationship will be.

- Pricing model transparency. Per-seat, per-query, per-document, or flat-rate — the model matters as much as the number. Flat-rate pricing scales better as adoption grows; per-seat models can penalize you for success. Vendors that require lengthy discovery before sharing pricing are typically among the most expensive — and the least likely to be a good long-term partner. Get a fully-loaded estimate before entering procurement.

- Adoption and interface flexibility. The highest-adoption platforms embed into tools employees already use — Slack, Teams, browser extensions — rather than requiring a new destination. If employees have to remember to open a new tab, most won’t. Adoption is the metric that determines whether your investment delivers ROI or sits underused.
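The answer-quality test described above can be run as a small, repeatable harness so that every vendor in a proof of concept faces the same questions. A minimal sketch, assuming you wrap each platform's API in a function `ask(question) -> str` (hypothetical; vendors expose different APIs). The cases and abstention phrases are placeholders for your own, and substring matching is only a rough first pass before human review.

```python
from typing import Callable, Optional

# Hypothetical cases: (question, text a correct answer must contain).
# None means the honest answer is "not documented", so the system should abstain.
EVAL_CASES: list[tuple[str, Optional[str]]] = [
    ("How many weeks of parental leave do primary caregivers get?", "14 weeks"),
    ("Can I bring my dog to the Lisbon office?", None),
]

# Placeholder phrases that count as the system admitting it doesn't know.
ABSTAIN_MARKERS = ("i don't know", "couldn't find", "not documented")


def score(ask: Callable[[str], str]) -> dict[str, int]:
    """Run every case through `ask` (a wrapper around the platform under test)."""
    tally = {"correct": 0, "wrong": 0, "abstained_correctly": 0, "fabricated": 0}
    for question, expected in EVAL_CASES:
        answer = ask(question).lower()
        if expected is None:
            abstained = any(marker in answer for marker in ABSTAIN_MARKERS)
            tally["abstained_correctly" if abstained else "fabricated"] += 1
        else:
            tally["correct" if expected.lower() in answer else "wrong"] += 1
    return tally


def fake_platform(question: str) -> str:
    """Stub standing in for a vendor API during a dry run of the harness."""
    if "parental leave" in question:
        return "14 weeks for primary caregivers [HR Policy v4.2]."
    return "Yes, dogs are welcome on Fridays."  # confident but unsupported


print(score(fake_platform))
```

The `fabricated` count is the one to watch: it measures exactly the confident wrong answers that do the most damage in an enterprise setting.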

AI Enterprise Search vs. Traditional Search: Side-by-Side

| | Keyword Search | Semantic Search | AI Enterprise Search |
|---|---|---|---|
| Query type | Exact terms | Natural language | Natural language + intent |
| Result format | Document links | Document links | Direct answers with citations |
| Cross-tool search | Single source | Single source | Multiple sources unified |
| Permission enforcement | Varies | Varies | Dynamic, query-time |
| Context awareness | None | Limited | User role, team, history |
| Agentic capabilities | None | None | Yes (varies by platform) |

Most modern enterprise search platforms don’t force a choice between these approaches — they combine all three. Keyword search for precision, semantic search for relevance, LLMs for answer synthesis. The best platforms apply the right approach for each query automatically, without any configuration required.

The Leading AI Enterprise Search Platforms in 2026

For a deeper look at the full landscape, the Top Enterprise Search Software in 2026 guide covers 15 platforms across features, architecture, pricing models, and deployment complexity. The six platforms below represent the most common choices among enterprise buyers — and the ones where the differences in approach, cost, and capability are most pronounced.

GoSearch

GoSearch is an AI enterprise search and agents platform built for mid-market to enterprise teams. It offers 100+ integrations, including Slack, Google Workspace, Confluence, Jira, Salesforce, and ServiceNow, through a hybrid indexed and federated architecture that balances speed with data security. The GoAI assistant returns direct, cited answers in natural language, and the platform also includes native go link support and no-code AI workflows for multi-step automation across connected tools.

Best for: Organizations that want fast time-to-value, transparent pricing, and a single platform covering search, AI assistance, and workflow automation — without a lengthy implementation or significant infrastructure overhead.

Glean

Glean uses a knowledge graph to map relationships between people, content, and activity across the organization, with results personalized by team, past behavior, and connections. Its infrastructure requirements and pricing can be significant, particularly in bring-your-own-cloud deployments, and organizations evaluating Glean often find that GoSearch delivers comparable search quality with faster deployment and more predictable costs.

Best for: Large enterprises with complex personalization requirements and the budget for premium deployment. Less suited to teams prioritizing agentic workflow automation, cost predictability, or fast time-to-value.

Moveworks

Moveworks approaches enterprise search from an IT automation angle — it started as an ITSM tool and has expanded into broader knowledge access. Its strength is handling natural language requests for IT and HR tasks: resetting passwords, provisioning access, answering policy questions, with deep ServiceNow integration.

Best for: Organizations primarily focused on IT ticket deflection and HR service automation, where search is an extension of the service desk rather than a standalone knowledge layer.

Microsoft Copilot / Microsoft Search

Microsoft’s search and AI layer provides tight integration across SharePoint, Teams, Outlook, and the M365 suite. It works best when knowledge largely lives in Microsoft tools — cross-ecosystem search into Slack, Salesforce, or Jira requires additional configuration and typically produces thinner results.

Best for: Organizations with M365-centric tech stacks and existing Microsoft enterprise agreements, where the primary use case is surfacing knowledge from within the Microsoft ecosystem.

Coveo

Coveo specializes in embedding AI-powered search inside existing applications — particularly Salesforce, ServiceNow, and commerce platforms. Rather than a standalone interface, it enhances search experiences that already exist within your tools, with particular strength in customer-facing use cases like support portals and e-commerce.

Best for: Organizations needing AI-powered search embedded inside Salesforce, ServiceNow, or customer-facing applications — where search needs to live inside existing workflows.

Elastic Enterprise Search

Elastic is a technically flexible option — built on open-source Elasticsearch, it gives engineering teams full control over the search stack. It’s not a plug-and-play solution; it requires engineering resources to configure, maintain, and build custom search experiences on top of it.

Best for: Organizations with dedicated search engineering teams building custom search architectures — particularly those embedding search into products or applications rather than deploying for internal employee use.

How to Build the Business Case for AI Enterprise Search

Quick Answer: The most compelling business case for AI enterprise search quantifies the cost of information friction in hours and dollars, applies a conservative productivity recovery rate, and compares the result to platform cost. Most organizations find a 3–5x ROI within the first year, before counting secondary benefits like ticket deflection and faster onboarding.

Getting budget approved means translating a productivity problem into financial terms. Here’s a framework that holds up in conversations with CFOs, CIOs, and executive leadership — and a starting point for building the internal business case.

The ROI calculation

- Establish the baseline. McKinsey research puts information-finding at roughly 20% of the working week. Gartner research found employees waste an average of 1.8 hours per day searching for and gathering information. Survey your own employees to confirm — the real number often surprises people.

- Calculate the cost. At average knowledge worker salaries, a 1,000-person organization losing 20% of each employee’s week to search friction is losing over $3 million annually — before accounting for the cascading cost of decisions made on incomplete information.

- Apply a conservative recovery rate. Strong AI enterprise search deployments typically recover 30–50% of time lost to information-finding. Apply a conservative 25–30% to avoid overpromising. At 30%, a $3M problem becomes approximately $900K in recoverable productivity annually.

- Add secondary savings. IT ticket deflection alone adds up quickly: 30% fewer requests on a team handling 500 Tier 1 tickets per month at $20 per ticket is 150 fewer tickets a month, or $36K annually in direct savings, plus analyst time freed for higher-value work. For high-growth organizations, faster onboarding is another material line item; IDC estimates lost productivity during new hire ramp costs $10,000–$25,000 per person.

- Compare to platform cost. The math is straightforward: take your conservative productivity recovery figure, add secondary savings from ticket deflection and onboarding, and compare the total to the annual platform cost. For most organizations running this model honestly, the case makes itself.
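The arithmetic above fits in a short function, which makes it easy to rerun with your own survey data. The friction cost, recovery rate, and ticket figures below are the ones used in this section. The platform cost is a placeholder to replace with a real quote, and onboarding savings are left at zero until you have your own hiring numbers.

```python
def annual_roi(
    friction_cost: float,        # yearly cost of time lost to searching
    recovery_rate: float,        # share of that time recovered, e.g. 0.30
    tier1_tickets_per_month: int,
    deflection_rate: float,      # share of Tier 1 tickets avoided
    cost_per_ticket: float,
    new_hires_per_year: int,
    saving_per_new_hire: float,  # value of faster ramp, dollars per hire
    platform_cost: float,        # yearly licenses plus any infrastructure
) -> dict[str, float]:
    productivity = friction_cost * recovery_rate
    tickets = tier1_tickets_per_month * deflection_rate * cost_per_ticket * 12
    onboarding = new_hires_per_year * saving_per_new_hire
    total_benefit = productivity + tickets + onboarding
    return {
        "productivity": productivity,
        "ticket_deflection": tickets,
        "onboarding": onboarding,
        "total_benefit": total_benefit,
        # Benefit-to-cost ratio; subtract 1 for net return on the spend.
        "benefit_to_cost": total_benefit / platform_cost,
    }


result = annual_roi(
    friction_cost=3_000_000,
    recovery_rate=0.30,
    tier1_tickets_per_month=500,
    deflection_rate=0.30,
    cost_per_ticket=20,
    new_hires_per_year=0,       # fill in from your hiring plan
    saving_per_new_hire=0.0,
    platform_cost=250_000,      # placeholder, not a real price
)
for name, value in result.items():
    print(f"{name}: {value:,.2f}")
```

Keeping each benefit as a separate line item lets a CFO challenge one assumption (say, the recovery rate) without discarding the whole model.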

Metrics to commit to before deployment

Agree on targets before go-live, not after. The metrics that matter most:

- Mean time to answer for common HR and IT questions — establish a baseline before deployment

- Tier 1 IT ticket volume — target 20–40% reduction within 90 days

- Search abandonment rate — how often employees start a search and give up without finding an answer

- New hire time-to-productivity — days until new employees are self-sufficient

- Weekly active users as a percentage of licensed seats — target 60%+ within 60 days
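Two of these metrics can be computed directly from search logs. A minimal sketch over a hypothetical event log: "abandoned" is assumed to mean a search that ended without a click or an accepted answer, which is one reasonable definition rather than a standard one, and weekly active share counts distinct searchers in a seven-day window against licensed seats.

```python
from datetime import date

# Hypothetical search log: (user, day, True if the search ended in a click
# or an accepted answer, False if the user gave up).
events: list[tuple[str, date, bool]] = [
    ("alice", date(2026, 4, 6), True),
    ("alice", date(2026, 4, 7), False),
    ("bob", date(2026, 4, 8), True),
    ("carol", date(2026, 4, 9), False),
]
LICENSED_SEATS = 5  # hypothetical


def abandonment_rate(log: list[tuple[str, date, bool]]) -> float:
    """Share of searches that ended without a click or accepted answer."""
    abandoned = sum(1 for _, _, succeeded in log if not succeeded)
    return abandoned / len(log)


def weekly_active_share(log: list[tuple[str, date, bool]], seats: int, week_start: date) -> float:
    """Distinct users who searched in the 7 days starting at week_start, over seats."""
    active = {user for user, day, _ in log if 0 <= (day - week_start).days < 7}
    return len(active) / seats


print(f"Search abandonment: {abandonment_rate(events):.0%}")
print(f"Weekly active share: {weekly_active_share(events, LICENSED_SEATS, date(2026, 4, 6)):.0%}")
```

Whatever definition you choose, fix it before go-live so the baseline and the post-deployment numbers are measured the same way.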

Addressing common objections

Data security and permissions. AI enterprise search doesn’t change what people can see — it makes it faster to find what they’re already allowed to access. The permission model is the same; the retrieval is just faster and smarter. When evaluating platforms, verify SOC 2 Type II certification, data residency options, and whether your LLM provider uses query data for model training.

Adoption. The platforms with the highest adoption rates embed into tools employees already use — Slack, Teams, browser extensions — rather than requiring a separate destination. Where you deploy the interface is as important as which platform you choose.

“We already have SharePoint / Confluence.” These tools solve document storage, not knowledge retrieval. AI enterprise search doesn’t replace your wikis — it makes them accessible to the people who don’t know where to look.

Documentation quality. A baseline is needed, but it doesn’t need to be perfect before deployment. Deploying search tends to surface where knowledge gaps exist, which often becomes the forcing function for a documentation improvement program — not a reason to delay.

From Contract to First Query: What a Successful Deployment Looks Like

Phase 1: Core connections

Start with the three to five tools where the most knowledge lives and search friction is highest. For most organizations that means Google Drive or SharePoint, Slack or Teams, Confluence or Notion, and Jira or ServiceNow. Most modern platforms have prebuilt connectors for these tools that can be authorized and configured by an admin — no engineering required. Get permission syncing in place and run test queries to validate answer quality before any users see it.

Phase 2: Pilot with a target team

Pick the team where ROI is clearest — typically IT, HR, or engineering — and deploy there first. Collect feedback on answer quality, missing connectors, and user experience. Identify the highest-value workflows to automate. Measure the metrics you committed to at the start of the pilot. Even early data showing ticket deflection or time savings builds the case for broader rollout and keeps executive sponsors engaged.

Phase 3: Broader rollout

Expand to additional teams, connect additional data sources, and begin deploying AI agents for the automation use cases identified in the pilot. The focus shifts from configuration to adoption — promote the tool in Slack or Teams, share concrete wins from the pilot team, and make the interface available wherever employees already work.

Change management matters more than technology

The most common reason deployments underperform isn’t the technology — it’s adoption. The tactics that work: showing real example queries rather than feature descriptions, internal champions who can demonstrate the tool in team meetings, and a feedback channel where employees can flag wrong or missing answers. Wrong answers should be treated as signal, not failure — they reveal gaps in underlying knowledge that are worth fixing regardless of the platform.

From Productivity Tool to AI Infrastructure: What Comes Next

The shift from retrieval to action

The clearest direction in the market is the move from answering questions to completing tasks. Answering “what’s the process for requesting a software license?” is useful. Handling the request — submitting the form, routing for approval, notifying the requestor — is transformative. Agentic AI is making this possible, and the platforms building credible agent capabilities now will be significantly harder to displace than those that remain retrieval-only.

MCP and real-time, live-data connectors

The Model Context Protocol (MCP) is an open standard, introduced by Anthropic in late 2024, that gives AI applications a common way to connect to external tools and data sources. Because those connections can query a system at the moment a question is asked, rather than relying only on a pre-indexed snapshot, answers can reflect the current state of your systems instead of an index that’s hours or days old. As MCP adoption grows across the industry, the line between “search” and “live system access” will increasingly blur.
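MCP messages are JSON-RPC 2.0 requests. The sketch below builds the `tools/call` request an AI client would send to an MCP server when a question is asked. This is what "live" means in practice: the ticketing system answers the query itself, rather than the search platform consulting a copy it indexed earlier. The sketch reflects our reading of the MCP specification, so check the current revision before relying on field names. The tool name `search_tickets` and its arguments are hypothetical, and the transport, the `initialize` handshake, and response handling are all omitted.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC request ids must be unique within a session


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message string."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool exposed by a ticketing system's MCP server; the name and
# argument schema are illustrative, not any vendor's real interface.
print(mcp_tool_call("search_tickets", {"query": "VPN outage", "status": "open"}))
```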

Gartner’s market validation

Gartner’s 2025–2026 Market Guide for Enterprise AI Search marks a category inflection point. Its core finding, that enterprise search has become AI infrastructure rather than just a productivity tool, reflects where leading organizations are already heading. For IT leaders building internal business cases, it’s a useful data point: this isn’t a speculative investment, because it has analyst-level validation behind it.

Multimodal search

Today’s AI enterprise search is primarily text-based. The next wave handles images, diagrams, audio transcripts, and video as first-class search objects. For organizations with significant non-text knowledge — engineering diagrams, recorded customer calls, training videos, scanned documents — multimodal search will dramatically expand the percentage of organizational knowledge that’s actually findable.

Where to Go From Here

AI enterprise search is not a future technology. It’s solving a real and quantifiable problem right now — and the gap between organizations that have deployed it and those that haven’t is growing every quarter.

The organizations seeing the strongest results share a few things in common: they started with a specific, high-value use case rather than trying to solve everything at once. They chose a platform with deep integrations into the tools their employees already use. They treated adoption as an active project, not an outcome. And they measured what matters — time saved, tickets deflected, answers found — rather than vanity metrics.

The path forward is straightforward:

- Identify your highest-cost knowledge friction. Where does your team lose the most time searching, waiting, or re-answering the same questions?

- Evaluate platforms against your actual tools and data. A real-environment test will tell you more than any scripted demo.

- Build the business case in time and dollars. Run the numbers — most organizations find the ROI case makes itself.

- Start small, measure early, and expand. A proof of concept or small-team rollout will surface the real gaps, missing integrations, and edge cases that no sales presentation will.
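"Run the numbers" can start from this guide’s own figures: roughly 20% of the working week spent looking for information, and 30-50% of that recovered in strong deployments. The sketch below turns those figures into an annual net benefit. Headcount, loaded cost per employee, and platform cost are hypothetical inputs. Replace them with your own figures, and replace the defaults with your pilot’s measured results once you have them.

```python
def annual_search_cost(headcount: int, loaded_cost: float, search_share: float = 0.20) -> float:
    """Yearly cost of time spent looking for information.

    loaded_cost: average fully loaded annual cost per employee (your figure).
    search_share: fraction of the week spent searching; 0.20 is the ~20% cited in this guide.
    """
    return headcount * loaded_cost * search_share


def net_annual_benefit(headcount: int, loaded_cost: float, recovery_share: float,
                       platform_cost: float, search_share: float = 0.20) -> float:
    """Value of the search time recovered, minus the platform's annual cost."""
    recovered = annual_search_cost(headcount, loaded_cost, search_share) * recovery_share
    return recovered - platform_cost


# Hypothetical inputs: 1,000 employees, $100k loaded cost, $500k/year platform cost.
for recovery in (0.30, 0.50):  # the 30-50% recovery range from the Key Takeaways
    benefit = net_annual_benefit(1_000, 100_000, recovery, platform_cost=500_000)
    print(f"{recovery:.0%} recovered -> net ${benefit:,.0f} per year")
```

Recovered time is capacity rather than cash returned to the budget. Framing it that way, and pairing it with measured pilot numbers, usually makes the case more credible.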

GoSearch is an AI-powered enterprise search and agents platform that connects to 100+ workplace tools — giving your team one intelligent interface to find answers and get work done. Get started free to see it in action.


Frequently Asked Questions: AI Enterprise Search

What is the difference between AI enterprise search and traditional enterprise search?

Traditional enterprise search uses keyword matching within individual tools and returns a list of document links. AI enterprise search uses large language models and semantic retrieval to interpret the intent behind a query and surface direct, cited answers from across all connected data sources simultaneously. The practical difference: traditional search tells you where to look; AI enterprise search tells you the answer.

How long does it take to deploy AI enterprise search?

Most modern platforms can complete initial deployment (connecting core tools and running useful queries) within one to two weeks. A full rollout typically takes four to twelve weeks, depending on the number of integrations, permission complexity, and the change management involved. Platforms that require custom connector development or professional services engagements can take longer.

Is AI enterprise search secure?

Yes, when deployed correctly. The key mechanism is permission-aware retrieval: the system only surfaces content to users who are already authorized to access it in the source system. Enterprise-grade platforms typically hold a SOC 2 Type II attestation, support SSO and role-based access controls, encrypt data in transit and at rest, and provide full audit logs. Whatever the platform, ask your vendor whether your query data is used to train LLMs.
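Permission-aware retrieval is easiest to picture as a filter applied before anything is ranked or summarized. The sketch below is a hypothetical, in-memory version. Each document carries the users and groups allowed to read it; in a real deployment, these ACLs would be synced from the source system. Every query checks those ACLs first. The keyword scoring is only a stand-in for a real semantic retriever, and the document and user names are made up.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    # ACLs mirrored from the source system (hypothetical schema).
    allowed_users: FrozenSet[str] = frozenset()
    allowed_groups: FrozenSet[str] = frozenset()


@dataclass(frozen=True)
class User:
    user_id: str
    groups: FrozenSet[str] = frozenset()


def can_view(user: User, doc: Document) -> bool:
    """True if the user is listed directly or shares at least one group with the ACL."""
    return user.user_id in doc.allowed_users or bool(user.groups & doc.allowed_groups)


def search(user: User, query: str, index: List[Document]) -> List[str]:
    """Return matching document IDs, checking permissions at query time before scoring."""
    visible = [d for d in index if can_view(user, d)]
    terms = query.lower().split()
    scored = [(sum(t in d.text.lower() for t in terms), d.doc_id) for d in visible]
    ranked = sorted(scored, key=lambda s: s[0], reverse=True)  # highest score first
    return [doc_id for score, doc_id in ranked if score > 0]   # drop non-matches


index = [
    Document("hr-salary-bands", "salary bands by level", allowed_groups=frozenset({"hr"})),
    Document("it-vpn-guide", "how to set up the vpn", allowed_groups=frozenset({"all-staff"})),
]
alice = User("alice", groups=frozenset({"all-staff"}))
bob = User("bob", groups=frozenset({"all-staff", "hr"}))
print(search(alice, "salary bands", index))  # []: alice is not in the hr group
print(search(bob, "salary bands", index))
```

Because the check runs on every query, a permission revoked in the source system stops that content from appearing on the next search, provided the synced ACLs are current.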

What integrations does an AI enterprise search platform need?

At minimum, it needs to connect to wherever your organization’s most important knowledge lives: typically a document storage system (Google Drive or SharePoint), a messaging platform (Slack or Teams), a wiki (Confluence or Notion), and a ticketing system (Jira or ServiceNow). Integrations with Salesforce, Workday, Gmail, and Outlook expand coverage significantly. The most effective deployments connect 8–12 core tools within the first 90 days.

How is AI enterprise search different from ChatGPT or other general-purpose AI tools?

Out of the box, general-purpose AI tools like ChatGPT or Gemini generate answers mainly from their training data. They cannot see your company’s internal documents, tools, or data unless someone uploads or connects them. AI enterprise search retrieves from your organization’s actual knowledge and uses the LLM to synthesize answers from that content. Permission enforcement, source citation, and grounding in real internal data are what make enterprise search safe and useful where a general-purpose AI tool isn’t.
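The retrieve-then-synthesize pattern described here is commonly called retrieval-augmented generation (RAG). The sketch below covers only its last step and assumes passages have already been retrieved and permission-filtered. It numbers each passage, tells the model to answer only from those passages and cite them by number, and refuses rather than guesses when nothing was retrieved. The `Passage` type, the prompt wording, and the example passage are illustrative, and the actual LLM call is left out.

```python
from typing import NamedTuple, Sequence


class Passage(NamedTuple):
    source: str  # hypothetical label, e.g. a document title or URL from a connected tool
    text: str


def build_grounded_prompt(question: str, passages: Sequence[Passage]) -> str:
    """Assemble an LLM prompt restricted to retrieved, permission-filtered passages."""
    if not passages:
        # Nothing the user may see matched: say so rather than let the model improvise.
        raise ValueError("no permitted sources retrieved for this question")
    sources = "\n".join(
        f"[{i}] ({p.source}) {p.text}" for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the numbered sources below. "
        "Cite each claim as [n]. If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Made-up passage for illustration only.
prompt = build_grounded_prompt(
    "How much parental leave do we offer?",
    [Passage("HR handbook: Leave policy", "Full-time employees receive 16 weeks of paid parental leave.")],
)
print(prompt)
```

The numbered labels are what let the interface show citations next to the answer, so users can open the source and check it.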

Does our documentation need to be cleaned up before we deploy?

AI enterprise search surfaces what exists; it doesn’t improve content quality on its own. Deploying search often becomes the forcing function for documentation improvements: employees quickly identify where answers are missing or conflicting, and search analytics surface the most common unanswered queries. Most organizations find that deploying first accelerates a documentation cleanup, rather than holding deployment back until the cleanup is done.
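A report of the most common unanswered queries is, at its core, a frequency count over the query log. The sketch below assumes a hypothetical log of `(query, answered)` pairs. Here `answered` stands for whatever signal your platform records, such as no result returned or a user flagging the answer as wrong.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def top_unanswered(log: Iterable[Tuple[str, bool]], n: int = 10) -> List[Tuple[str, int]]:
    """Most frequent unanswered queries. Case and whitespace are normalized
    so trivially different phrasings are counted together."""
    counts = Counter(" ".join(query.lower().split()) for query, answered in log if not answered)
    return counts.most_common(n)


query_log = [
    ("How do I expense travel?", False),
    ("how do I expense  travel?", False),
    ("VPN setup", True),
    ("parental leave policy", False),
]
print(top_unanswered(query_log, n=2))
```

Each top entry is a candidate for a new or corrected document. This exact-match count does not group genuine paraphrases; that would take something like embedding-based clustering.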