There is a pattern that keeps appearing in enterprise AI deployments. An organization invests in an AI agent or AI assistant. Early demos go well. Pilot results look promising. Then the rollout begins — and performance collapses.

Answers become unreliable. Users stop trusting the system. Adoption stalls.

The diagnosis is almost always the same: not the model, not the agent architecture — the knowledge layer. Specifically, AI agents underperform due to failures in RAG retrieval, not in generation.

Gartner’s Market Guide for Enterprise AI Search provides data to back up what practitioners have been observing firsthand. The report identifies the core issue directly:

“Current RAG-based AI assistants and agents often underperform when scaled across diverse enterprise information, primarily due to issues with data source quality, and retrieval relevancy mechanisms.”

— Gartner, Market Guide for Enterprise AI Search, September 2025

This isn’t a fringe finding. It’s a pattern Gartner identified across organizations in North America, Europe, and Asia-Pacific. And it has a direct solution: investing in enterprise AI search as the foundational infrastructure that makes AI agents reliable.

What Is RAG and Why Does Retrieval Failure Matter?

Retrieval-augmented generation (RAG) is an architectural pattern that grounds AI outputs in real, current enterprise data rather than relying solely on a model’s training data. Before generating a response, the system retrieves relevant context from enterprise knowledge sources and includes that context in the prompt.

The consequence of this architecture is direct:

- If RAG retrieval is accurate and comprehensive, the AI produces reliable, grounded answers.

- If RAG retrieval is incomplete, stale, or mismatched to the query, the AI produces confident-sounding responses that are wrong.
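To make that dependency concrete, here is a minimal Python sketch of the retrieve-then-prompt step. It is an illustration, not any vendor's implementation. The `Document` type, the word-overlap retriever, and the prompt template are assumptions, and the actual LLM call is left out. The point it shows is that the model only ever sees what `retrieve` returns.

```python
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    text: str


def tokenize(text: str) -> set[str]:
    # Lowercased bag of words; production systems use real analyzers or embeddings.
    return set(text.lower().split())


def retrieve(query: str, corpus: list[Document], top_k: int = 2) -> list[Document]:
    # Toy lexical retriever: rank documents by how many query terms they share.
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(doc.text)), doc) for doc in corpus]
    hits = [(score, doc) for score, doc in scored if score > 0]
    hits.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in hits[:top_k]]


def build_prompt(query: str, context: list[Document]) -> str:
    # Retrieved passages are placed in the prompt so the model answers from them.
    if not context:
        return f"No supporting documents were retrieved.\nQuestion: {query}"
    passages = "\n".join(f"[{doc.doc_id}] {doc.text}" for doc in context)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{passages}\n"
        f"Question: {query}"
    )


corpus = [
    Document("hr-12", "refunds for travel expenses are processed within 30 days"),
    Document("it-04", "reset your vpn password from the self-service portal"),
]
question = "how are travel refunds processed"
prompt = build_prompt(question, retrieve(question, corpus))
print(prompt)
```

Suppose `retrieve` returns nothing, or returns the wrong document. Then the prompt carries no correct facts, and no choice of model can recover them.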

This is why most enterprise AI agents underperform at scale. The model is not the bottleneck — the retrieval layer is. Gartner identifies two specific failure modes: data source quality issues and retrieval relevancy gaps. Neither is solved by switching LLM providers. Both are solved by building a proper enterprise search infrastructure underneath your AI.

The Root Cause: Why RAG Retrieval Makes AI Agents Underperform at Scale

1. Fragmented enterprise knowledge

The phrase “diverse enterprise information” in Gartner’s finding describes a reality every IT leader knows well: organizational knowledge is scattered across dozens of systems that were never designed to interoperate.

A typical mid-to-large enterprise knowledge environment includes:

- Documents and files across Google Workspace or Microsoft 365

- Conversations and institutional context in Slack or Teams

- Project tracking data in Jira or Asana

- Process and technical documentation in Confluence or Notion

- Customer history in Salesforce or HubSpot

- Support resolutions in Zendesk or ServiceNow

- HR policies in Workday or BambooHR

- Code repositories and incident history in GitHub or GitLab

Each system maintains its own data model, access controls, search index, and definition of “relevant.” An AI agent that can access only one or two of these systems operates with a partial and distorted picture of organizational knowledge.

Gartner’s 2024 Digital Worker Survey found that 34% of employees have difficulty finding information — and that among those using AI tools specifically to find data, 36% still struggle to access what they need. The AI did not solve the fragmentation problem. It inherited it.

2. Retrieval relevancy gaps

Even when data sources are connected, RAG pipelines often fail due to relevance issues. Traditional keyword-based search cannot surface documents that are semantically related but use different terminology. An AI agent querying for “headcount reduction strategy” may miss a critical document titled “workforce realignment framework” because the literal words do not match.

This is a retrieval failure — and it directly causes AI agent underperformance, regardless of the underlying model’s capabilities.
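The mismatch is easy to reproduce. The sketch below counts literal shared words, which is roughly what pure keyword matching rewards. It then repeats the count after mapping words to shared concepts. The `CONCEPTS` table is a hypothetical, hand-written stand-in for what an embedding model learns. Real semantic retrieval uses learned vectors, not a lookup table.

```python
# Hypothetical concept map standing in for what an embedding model learns:
# different surface words are mapped to a shared concept label.
CONCEPTS = {
    "headcount": "staffing",
    "workforce": "staffing",
    "reduction": "restructuring",
    "realignment": "restructuring",
    "strategy": "plan",
    "framework": "plan",
}


def keyword_overlap(query: str, title: str) -> int:
    # Literal keyword matching: count words that appear in both strings.
    return len(set(query.lower().split()) & set(title.lower().split()))


def concept_overlap(query: str, title: str) -> int:
    # Same count, but after mapping each word to its concept label (if any).
    def to_concepts(text: str) -> set[str]:
        return {CONCEPTS.get(word, word) for word in text.lower().split()}

    return len(to_concepts(query) & to_concepts(title))


query = "headcount reduction strategy"
title = "workforce realignment framework"
print(keyword_overlap(query, title))  # 0: keyword search never surfaces this document
print(concept_overlap(query, title))  # 3: every query concept is present in the title
```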

3. Stale and low-quality data (ROT content)

Gartner highlights a concept that is critical to understanding why RAG-based AI agents underperform: ROT content, meaning Redundant, Obsolete, and Trivial information. When RAG pipelines retrieve ROT content, AI agents generate responses grounded in outdated or irrelevant data.

The inverse standard Gartner recommends is APT content: Accurate, Pertinent, and Trusted. Gartner clearly states that even the most advanced AI cannot deliver valuable results from poor data.
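One way to keep ROT out of the pipeline is to screen documents before they are indexed. The sketch below uses three simple heuristics:

- exact-duplicate hashing for redundant content
- an age cutoff for obsolete content
- a word-count floor for trivial content

The heuristics and thresholds (730 days, 20 words) are illustrative assumptions, not values Gartner prescribes. A real program would add near-duplicate detection and owner review.

```python
import hashlib
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Doc:
    doc_id: str
    text: str
    last_modified: date


def rot_label(doc, seen_hashes, today, max_age_days=730, min_words=20):
    """Return "redundant", "obsolete", "trivial", or None if the doc passes."""
    # Redundant: identical text (after lowercasing and collapsing whitespace) was already seen.
    normalized = " ".join(doc.text.lower().split())
    digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
    if digest in seen_hashes:
        return "redundant"
    seen_hashes.add(digest)
    # Obsolete: not modified within the allowed age window.
    if today - doc.last_modified > timedelta(days=max_age_days):
        return "obsolete"
    # Trivial: too short to carry useful context.
    if len(normalized.split()) < min_words:
        return "trivial"
    return None


def filter_for_index(docs, today):
    # Split a batch into documents to index and documents held back with a reason.
    seen_hashes = set()
    kept, held_back = [], {}
    for doc in docs:
        label = rot_label(doc, seen_hashes, today)
        if label is None:
            kept.append(doc)
        else:
            held_back[doc.doc_id] = label
    return kept, held_back


today = date(2025, 9, 1)
batch = [
    Doc("policy-v3", "expense policy " * 12, date(2025, 6, 1)),
    Doc("policy-v3-copy", "EXPENSE POLICY " * 12, date(2025, 7, 1)),
    Doc("policy-2019", "travel policy " * 12, date(2019, 3, 1)),
    Doc("lunch", "lunch menu", date(2025, 8, 1)),
]
kept, held_back = filter_for_index(batch, today)
print([d.doc_id for d in kept])  # ['policy-v3']
print(held_back)
```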

Five Capabilities That Prevent AI Agent RAG Underperformance

Based on Gartner’s 2025 analysis of mandatory and common features in enterprise AI search platforms, five capabilities determine whether the RAG knowledge layer can support reliable AI agent performance at scale.

1. Comprehensive connector coverage

Gartner identifies connectors as a mandatory feature, not an optional one. Connectors integrate enterprise search tools with structured and unstructured data sources across file systems, document management systems, email, databases, and line-of-business applications. Leading vendors offer prebuilt connectors numbering in the hundreds.

An AI agent is limited by what the search layer can access. Coverage gaps are retrieval gaps. Retrieval gaps are performance gaps.
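Connector APIs differ by vendor, so the interface below (`Connector`, `fetch`, `StaticConnector`) is hypothetical. The sketch shows two ideas from the paragraph above:

- Every connected source feeds one index.
- Any required source without a connector is a gap you can list before an agent ever runs a query.

```python
from typing import Iterable, Protocol, Sequence


class Connector(Protocol):
    # Hypothetical interface: a source name plus a way to pull (doc_id, text) pairs.
    source: str

    def fetch(self) -> Iterable[tuple[str, str]]: ...


class StaticConnector:
    # Stand-in for a real integration such as a wiki or ticketing API client.
    def __init__(self, source: str, docs: list[tuple[str, str]]) -> None:
        self.source = source
        self.docs = docs

    def fetch(self) -> Iterable[tuple[str, str]]:
        return iter(self.docs)


def build_unified_index(connectors: Sequence[Connector]) -> dict[str, str]:
    # Prefix every id with its source so ids from different systems cannot collide.
    index: dict[str, str] = {}
    for connector in connectors:
        for doc_id, text in connector.fetch():
            index[f"{connector.source}:{doc_id}"] = text
    return index


def coverage_gaps(required_sources: list[str], connectors: Sequence[Connector]) -> list[str]:
    # A required system with no connector is invisible to every agent query.
    return sorted(set(required_sources) - {c.source for c in connectors})


connectors = [
    StaticConnector("confluence", [("101", "incident runbook for the payments API")]),
    StaticConnector("jira", [("101", "PAY-7: payments API returns 502 under load")]),
]
index = build_unified_index(connectors)
print(sorted(index))  # ['confluence:101', 'jira:101']
print(coverage_gaps(["confluence", "jira", "salesforce"], connectors))  # ['salesforce']
```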

2. Permission-aware security at query time

Enforcing access control at the retrieval layer is both a compliance requirement and a functional one. An AI agent that retrieves documents beyond a user's permission level produces answers that cannot be acted on, and it creates legal and compliance exposure.

Security trimming must be enforced at the RAG retrieval layer, not applied afterward. Skipping it, or bolting it on after results have been ranked, is a frequent source of enterprise AI agent failure in production deployments.
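The ordering matters. The sketch below trims by permission before scoring and ranking, so restricted text never reaches the ranking step or the prompt. It also keeps `top_k` from being filled with documents the user cannot see. Two simplifying assumptions apply:

- Group-based ACLs stored on each document (assumed to be synced from the source systems).
- A word-overlap scorer in place of real relevance ranking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # assumed to be synced from the source system's ACLs


def secure_search(query: str, docs: list[Doc], user_groups: set[str], top_k: int = 3) -> list[str]:
    # 1. Security trimming first: drop anything the user cannot read.
    visible = [d for d in docs if d.allowed_groups & user_groups]
    # 2. Only then score and rank, so top_k is filled from permitted documents
    #    and restricted text never reaches the ranking step or the prompt.
    terms = set(query.lower().split())
    scored = [(len(terms & set(d.text.lower().split())), d) for d in visible]
    ranked = sorted((pair for pair in scored if pair[0] > 0), key=lambda pair: pair[0], reverse=True)
    return [d.doc_id for _, d in ranked[:top_k]]


docs = [
    Doc("salary-bands", "engineering salary bands 2025", frozenset({"hr"})),
    Doc("career-ladder", "engineering career ladder and salary review process", frozenset({"all-staff"})),
]
print(secure_search("engineering salary", docs, {"all-staff"}))        # ['career-ladder']
print(secure_search("engineering salary", docs, {"hr", "all-staff"}))  # ['salary-bands', 'career-ladder']
```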

3. Hybrid search: keyword plus semantic retrieval

Pure keyword search cannot support enterprise AI workflows. Gartner describes hybrid search — combining traditional lexical search with advanced vectorization and semantic similarity techniques — as the standard for enterprise AI search.

The practical impact: AI agents retrieve context that is semantically relevant even when the exact keywords do not match. This directly addresses one of the two core RAG retrieval failure modes Gartner identifies.
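Gartner does not prescribe a specific fusion method. Reciprocal rank fusion (RRF) is one widely used way to merge a keyword ranking with a vector ranking, and the sketch below uses it with the commonly cited k = 60. The document set, the hand-written 3-dimensional vectors, the query vector, and the 0.5 similarity cutoff are all assumptions standing in for a real index and embedding model. Note that the "workforce realignment framework" document, which the keyword leg misses, still surfaces through the semantic leg.

```python
import math

# Toy corpus: doc id -> (title, embedding). The 3-dimensional vectors are
# hand-written stand-ins for what an embedding model would produce.
DOCS = {
    "workforce-framework": ("workforce realignment framework", [0.9, 0.1, 0.0]),
    "headcount-report": ("quarterly headcount report", [0.4, 0.0, 0.9]),
    "cafeteria-menu": ("cafeteria menu for march", [0.0, 1.0, 0.1]),
}


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_ranking(query: str) -> list[str]:
    # Lexical leg: rank by number of shared words, dropping zero-overlap docs.
    terms = set(query.lower().split())
    scores = {d: len(terms & set(title.lower().split())) for d, (title, _) in DOCS.items()}
    return [d for d in sorted(scores, key=lambda d: scores[d], reverse=True) if scores[d] > 0]


def vector_ranking(query_vec: list[float], min_sim: float = 0.5) -> list[str]:
    # Semantic leg: rank by cosine similarity, keeping only reasonably close docs.
    sims = {d: cosine(query_vec, vec) for d, (_, vec) in DOCS.items()}
    return [d for d in sorted(sims, key=lambda d: sims[d], reverse=True) if sims[d] >= min_sim]


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    # Each list contributes 1 / (k + rank); documents found by both legs rise to the top.
    fused: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(fused, key=lambda d: fused[d], reverse=True)


query = "headcount reduction strategy"
query_vec = [1.0, 0.0, 0.2]  # assumed embedding of the query
lexical = keyword_ranking(query)
semantic = vector_ranking(query_vec)
print(lexical)                                      # ['headcount-report']
print(reciprocal_rank_fusion([lexical, semantic]))  # ['headcount-report', 'workforce-framework']
```

RRF works on ranks rather than raw scores. That sidesteps the problem that keyword scores and cosine similarities live on different scales.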

4. Real-time or near-real-time indexing

Enterprise information changes constantly. A knowledge base synchronized overnight can be up to 24 hours stale by the time an agent queries it. For use cases like pricing, compliance, HR policy, or incident response, where accuracy is time-sensitive, stale RAG retrieval produces answers that are wrong by definition.

Real-time indexing is a prerequisite for reliable AI agent performance in dynamic enterprise environments.
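Near-real-time indexing generally means applying each change as the source emits it, rather than rebuilding on a schedule. The event shape and the `IncrementalIndex` class below are hypothetical. The "newest timestamp wins" rule is one simple way to handle out-of-order delivery, and it assumes source timestamps are trustworthy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ChangeEvent:
    # Hypothetical event shape; real sources emit changes via webhooks or change feeds.
    doc_id: str
    op: str  # "upsert" or "delete"
    text: str
    changed_at: datetime


class IncrementalIndex:
    def __init__(self) -> None:
        self.docs: dict[str, str] = {}
        self.versions: dict[str, datetime] = {}  # newest change applied per document

    def apply(self, event: ChangeEvent) -> bool:
        """Apply one change as it arrives; return False if it was skipped as stale."""
        if event.op not in ("upsert", "delete"):
            raise ValueError(f"unknown op: {event.op}")
        # Events can arrive out of order; never let an older change overwrite a newer one.
        current = self.versions.get(event.doc_id)
        if current is not None and event.changed_at <= current:
            return False
        # Versions are kept after deletes too, so a late upsert cannot resurrect the doc.
        self.versions[event.doc_id] = event.changed_at
        if event.op == "upsert":
            self.docs[event.doc_id] = event.text
        else:
            self.docs.pop(event.doc_id, None)
        return True


t0 = datetime(2025, 9, 1, 9, 0)
index = IncrementalIndex()
index.apply(ChangeEvent("price-sku-42", "upsert", "list price: $100", t0))
index.apply(ChangeEvent("price-sku-42", "upsert", "list price: $120", t0 + timedelta(minutes=5)))
late = index.apply(ChangeEvent("price-sku-42", "upsert", "list price: $100", t0 + timedelta(minutes=1)))
print(index.docs["price-sku-42"], late)  # list price: $120 False
```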

5. Information governance and content quality management

This is the capability most commonly overlooked in AI search deployments — and the one that causes the most downstream damage.

Gartner’s governance recommendations for enterprise AI search are concrete:

- Set clear policies for content quality and implement output monitoring for reliability and compliance

- Implement data cleansing programs to reduce ROT content and increase the proportion of APT information

- Apply comprehensive metadata enrichment to improve retrieval accuracy

- Build metrics that evaluate search data quality as part of a broader knowledge management program

Organizations that skip governance work to accelerate deployment consistently find that AI agent performance degrades over time as ROT content accumulates in the RAG pipeline.
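Gartner recommends building search data quality metrics but does not dictate which ones. The two below are assumptions chosen for illustration:

- the share of documents with complete metadata (owner, review date, tags)
- the share reviewed within the last 365 days

Tracked over time, falling numbers are an early signal that ROT content is accumulating.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class DocRecord:
    doc_id: str
    owner: Optional[str]
    last_reviewed: Optional[date]
    tags: list[str] = field(default_factory=list)


def quality_report(records: list[DocRecord], today: date, review_window_days: int = 365) -> dict[str, float]:
    """Share of documents with complete metadata and with a recent review."""
    if not records:
        return {"metadata_complete": 0.0, "recently_reviewed": 0.0}
    complete = sum(1 for r in records if r.owner and r.last_reviewed and r.tags)
    reviewed = sum(
        1
        for r in records
        if r.last_reviewed is not None and (today - r.last_reviewed).days <= review_window_days
    )
    total = len(records)
    return {"metadata_complete": complete / total, "recently_reviewed": reviewed / total}


records = [
    DocRecord("hr-001", "ana", date(2025, 3, 1), ["hr", "policy"]),
    DocRecord("it-014", None, date(2025, 8, 1), ["it"]),
    DocRecord("ops-007", "li", date(2022, 1, 1), []),
    DocRecord("fin-003", "sam", None, ["finance"]),
]
print(quality_report(records, today=date(2025, 9, 1)))
# {'metadata_complete': 0.25, 'recently_reviewed': 0.5}
```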

AI Assistants vs. AI Agents: Why RAG Retrieval Stakes Are Higher for Agents

Gartner draws a sharp distinction between AI assistants and AI agents — one that reframes RAG underperformance from a technical inconvenience into an operational risk.

AI assistants simplify tasks and interactions but depend on human input and do not operate independently. A partially correct answer from an AI assistant can be caught and corrected by the human who reads it.

AI agents can operate and perform complex, end-to-end tasks without constant human oversight. An AI agent that acts on a partially correct RAG retrieval result — updating a record, sending a communication, escalating a ticket — produces an error that propagates through a workflow before anyone notices.

This is why Gartner states that the effectiveness of AI assistants and agents hinges on grounding LLMs in accurate, pertinent, and trusted content. The higher the agent’s autonomy, the higher the stakes for the retrieval layer. Organizations building toward multi-agent ecosystems operating across platforms need knowledge infrastructure that matches the ambition.

Gartner's Market Guide for Enterprise AI Search (September 2025) concludes that enterprise AI search has become indispensable infrastructure. It enables both AI assistants and agents to synthesize information for human and automated consumption, and it drives the automation of complex business tasks.

How to Fix AI Agent RAG Underperformance: The Enterprise Search Solution

Gartner’s solution is clear: invest in enterprise AI search as the foundational infrastructure that makes AI agents reliable. This means implementing a federated search architecture that retrieves and synthesizes information across all connected systems in a unified query.

Gartner explicitly notes that federated search tools are seeing renewed interest from buyers as standards like the Model Context Protocol (MCP) make federation easier — the infrastructure for this is maturing rapidly.
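The core of federation can be sketched with Python's standard `asyncio`:

1. One query fans out to every connected source at once.
2. Each source gets a time budget.
3. The results are merged into one ranked list, and any sources that could not answer are reported.

The three search functions are hypothetical stand-ins, and scaling each source's scores by its top hit is a simplifying assumption. Nothing here implements MCP. Where a protocol like MCP is used, it governs how each source is exposed to the agent; this sketch only shows the fan-out and merge.

```python
import asyncio

Hit = tuple[str, float]  # (document id, source-specific relevance score)


# Hypothetical per-source search functions; real ones would call each system's API.
async def search_wiki(query: str) -> list[Hit]:
    await asyncio.sleep(0.01)
    return [("wiki:onboarding", 12.0), ("wiki:vpn-setup", 4.0)]


async def search_tickets(query: str) -> list[Hit]:
    await asyncio.sleep(0.01)
    return [("ticket:INC-1", 0.9)]


async def search_crm(query: str) -> list[Hit]:
    await asyncio.sleep(5)  # simulates a source that is too slow to answer in time
    return [("crm:acme", 1.0)]


async def federated_search(query, sources, timeout=0.2):
    """Query every source concurrently; return merged hits and the sources that failed."""

    async def guarded(name, search):
        try:
            return name, await asyncio.wait_for(search(query), timeout)
        except (asyncio.TimeoutError, OSError):
            return name, None  # one failing system should not sink the whole query

    results = await asyncio.gather(*(guarded(n, s) for n, s in sources.items()))
    merged, skipped = [], []
    for name, hits in results:
        if hits is None:
            skipped.append(name)
            continue
        # Raw scores are not comparable across systems; scale each source to [0, 1] by its top hit.
        top = max((score for _, score in hits), default=0.0)
        merged.extend((doc_id, score / top if top > 0 else 0.0) for doc_id, score in hits)
    merged.sort(key=lambda hit: hit[1], reverse=True)
    return merged, skipped


sources = {"wiki": search_wiki, "tickets": search_tickets, "crm": search_crm}
merged, skipped = asyncio.run(federated_search("new hire onboarding", sources))
print([doc_id for doc_id, _ in merged])  # ['wiki:onboarding', 'ticket:INC-1', 'wiki:vpn-setup']
print(skipped)                            # ['crm']
```

Reporting `skipped` alongside the results lets an agent, or the person reviewing its output, know that an answer was built without one of the sources.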

Implementation checklist for reducing AI agent RAG underperformance:

- Audit your data sources — identify every system containing knowledge your AI agents need to access

- Deploy comprehensive connectors — connect all relevant systems to a unified search layer

- Implement hybrid search — combine semantic and keyword retrieval to close relevancy gaps

- Enforce permission-aware retrieval — apply security trimming at the RAG layer

- Enable real-time indexing — prevent stale data from entering the RAG pipeline

- Execute a content governance program — reduce ROT content, increase APT content before scaling agent deployments

The organizations that get AI agents right in 2026 will not just be the ones that chose the right model. They will be the ones that fixed the knowledge layer first.

Stop debugging your AI agents. Fix the knowledge layer.

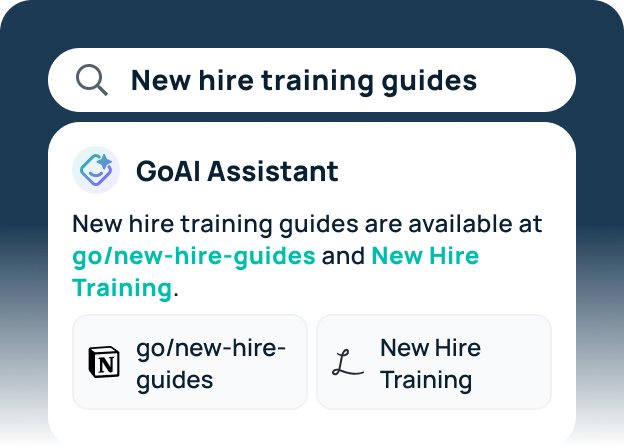

GoSearch connects 100+ enterprise applications into a unified, permission-aware search layer, with hybrid retrieval, continuous indexing, and content analytics built in. Everything on the Gartner framework. Nothing to build from scratch.


Frequently Asked Questions: AI Agents, RAG Retrieval Failures, and How to Fix Them

Why do AI agents underperform in enterprise environments?

AI agents underperform in enterprise environments primarily because of RAG retrieval failures, not model limitations. According to Gartner's 2025 Market Guide for Enterprise AI Search, RAG-based AI assistants and agents often underperform when scaled across diverse enterprise information due to data source quality issues and retrieval relevancy gaps. The knowledge layer, not the generation model, is the bottleneck.

What causes RAG retrieval failure in enterprise AI?

RAG retrieval failure in enterprise AI is caused by three main factors:

- Fragmented data sources that prevent comprehensive retrieval.
- Retrieval relevancy gaps, caused by keyword-only search that misses semantically related content.
- Low-quality or outdated data (ROT content: Redundant, Obsolete, Trivial) that pollutes the context passed to the AI model.

How does RAG retrieval affect AI agent accuracy?

RAG retrieval directly determines AI agent accuracy because agents ground their responses in what they retrieve. If retrieval is accurate and current, the agent produces reliable outputs. If retrieval is incomplete, stale, or irrelevant, the agent produces confident-sounding responses grounded in wrong or missing information. In agentic workflows, these errors propagate through automated processes before humans can intervene.

How do RAG retrieval requirements differ between AI assistants and AI agents?

AI assistants require accurate RAG retrieval to produce helpful responses, but humans can catch and correct errors before acting on them. AI agents require higher RAG retrieval quality because they act autonomously on what they retrieve (updating records, sending communications, or escalating tickets). That means retrieval errors propagate through workflows automatically. The higher the agent autonomy, the greater the impact of RAG retrieval failure.

What is APT content, and how does it differ from ROT content?

APT content (Accurate, Pertinent, and Trusted) is Gartner's quality standard for content that supports reliable AI agent outputs. The inverse concept, ROT content (Redundant, Obsolete, Trivial), describes content that actively degrades RAG performance by providing AI agents with outdated or irrelevant context. Organizations must actively manage content quality to reduce ROT and increase APT content before scaling RAG-based AI agent deployments.

What is federated search, and how does it prevent AI agent RAG underperformance?

Federated search is an architecture that retrieves and synthesizes information across all connected enterprise systems in a unified query, rather than querying each system separately. It prevents AI agent RAG underperformance by giving the retrieval layer comprehensive access to all relevant knowledge sources. That eliminates the coverage gaps that cause agents to generate responses based on partial or missing information.

Source: Gartner, “Market Guide for Enterprise AI Search,” September 2025. Gartner does not endorse any vendor, product, or service depicted in its research publications.