Quick Summary

- AI is powerful in enterprise search, but it has clear limitations.

- Issues like hallucinations, outdated data, and lack of context can lead to inaccurate results.

- Human oversight and strong data hygiene dramatically improve accuracy.

- The best results come from a hybrid human + AI approach.

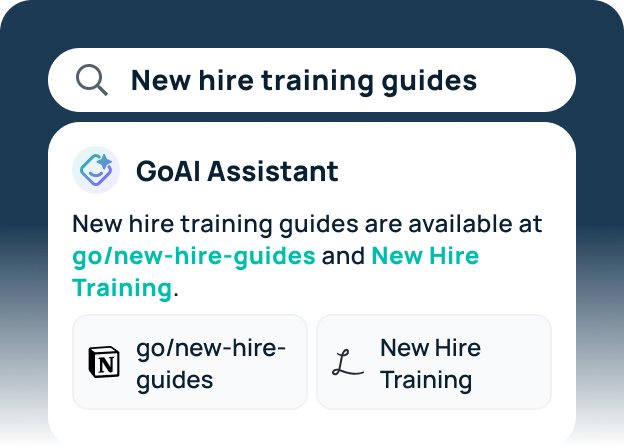

- Platforms like GoSearch pair agentic AI with permission-aware context and governance to mitigate risks.

AI-powered enterprise search has taken a massive leap forward. Teams now expect instant answers, agents that can take action, and intelligent search that understands their company’s language, structure, and workflows.

AI can dramatically reduce time spent searching, researching, and routing work. But AI isn’t magic. And when organizations aren’t familiar with its limitations, the experience can quickly become frustrating or even risky.

Understanding AI limitations in enterprise search helps teams set better expectations, avoid common pitfalls, and unlock far more value from the tools they adopt. Here’s how AI really works behind the scenes, where it falls short, and how to get the most out of it.

What AI Does Well in Enterprise Search

Despite its flaws, AI is exceptionally good at tasks historically painful for knowledge workers:

1. Processing massive amounts of data

No human can skim 40-page PDFs, Slack threads, Jira tickets, and wikis in seconds. AI can, and it makes that information discoverable.

2. Summarization and extraction

AI excels at condensing long documents, surfacing key points, and pulling structured info (like timelines or tasks) out of messy data.

3. Connecting information across apps

In modern orgs, knowledge is scattered. AI agents help unify it: searching Confluence and Slack, referencing Jira tickets, summarizing code changes, all in a single query.

4. Handling repetitive tasks

Routing a request, pulling recent updates, generating first-draft answers: these workflows are perfect for AI.

But the strengths only tell half the story.

Key AI Limitations in Enterprise Search

Even the best models share several fundamental limitations. These aren’t flaws of a specific tool. They’re inherent properties of large language models.

1. “Garbage in, garbage out” data dependency

AI relies entirely on the quality, freshness, and organization of your content. If your documents are outdated or conflicting, AI will surface that confusion back to users.

2. AI hallucinations still happen

Generative AI can produce answers that sound plausible but aren’t backed by real data. Without guardrails, this becomes a major trust issue.

3. Limited understanding of organizational nuance

AI doesn’t know which team owns a process, why a decision was made three quarters ago, or which runbook is canonical. Without context, AI sometimes picks the wrong source.

4. Generic, pattern-based outputs

AI often defaults to safe, repetitive answers. For nuanced internal situations, this lack of specificity can frustrate users.

5. Opaque reasoning and traceability

AI tools that don’t cite sources make it hard to validate accuracy, especially in industries that require auditability.

6. Over-reliance erodes human oversight

If teams assume AI is always right, internal knowledge quality degrades and mistakes become harder to catch. AI should accelerate decisions, not replace judgment.

AI Strengths vs. AI Limitations and How GoSearch Helps

| AI Strengths | AI Limitations | How GoSearch Mitigates |
| --- | --- | --- |
| Fast summarization and search | Can hallucinate false answers | Citations and permission-aware context reduce hallucination risk |
| Handles large data volumes | Data quality issues impact output | Verification and deprecation of content, proprietary ranking algorithm |
| Executes repetitive tasks | Lacks human nuance and judgment | Agents operate with guardrails and human-in-the-loop workflows |
| Connects info across apps | Limited understanding of org culture | Unified context and source ranking prioritize authoritative content |
| Always available | No accountability or intent | Auditable logs, governance rules, and security modeling |

Best Practices to Get the Most Out of Enterprise AI

Whether you’re evaluating tools or rolling out GoSearch internally, these principles help ensure accurate, trusted, reliable results.

1. Treat AI as an assistant, not a decision-maker

AI should speed up work, but humans should still make the final calls.

2. Invest in data hygiene

Archive outdated content. Merge duplicates. Update key runbooks. This alone can improve AI accuracy dramatically.
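To make the hygiene steps above concrete, here is a minimal Python sketch of two of them: flagging content that has not been updated recently and grouping exact duplicates. It is an illustration only, not how GoSearch or any specific tool works. The `Doc` record, the function names, and the 365-day threshold are all assumptions chosen for the example, and the duplicate check only catches text that is identical after normalizing case and whitespace.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from hashlib import sha256


@dataclass
class Doc:
    # Hypothetical record shape; real systems carry far more metadata.
    doc_id: str
    body: str
    last_updated: date


def find_stale(docs, today, max_age_days=365):
    """Return IDs of documents not updated within max_age_days (assumed threshold)."""
    cutoff = today - timedelta(days=max_age_days)
    return [d.doc_id for d in docs if d.last_updated < cutoff]


def find_duplicates(docs):
    """Group IDs of documents whose case- and whitespace-normalized text is identical."""
    groups = {}
    for d in docs:
        normalized = " ".join(d.body.lower().split())
        digest = sha256(normalized.encode("utf-8")).hexdigest()
        groups.setdefault(digest, []).append(d.doc_id)
    return [ids for ids in groups.values() if len(ids) > 1]


# Made-up sample content for illustration.
docs = [
    Doc("runbook-v1", "Restart the service,  then page on-call.", date(2022, 1, 10)),
    Doc("runbook-copy", "restart the service, then page on-call.", date(2024, 11, 2)),
    Doc("onboarding", "Welcome to the team.", date(2024, 12, 1)),
]

stale = find_stale(docs, today=date(2025, 1, 1))
duplicates = find_duplicates(docs)
print(stale)
print(duplicates)
```

A report like this is a starting point for human review, not an automatic delete list: an old document may still be the canonical one.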

3. Set realistic expectations internally

Share clear examples of what AI does well and where it struggles. That transparency builds trust and adoption.

4. Create feedback loops

Let employees report incorrect answers or outdated sources. Use that feedback to continually improve content quality.
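One simple way to act on that feedback is to count reports per source and review the most-flagged sources first. The sketch below assumes each report is a small dictionary with a `source` and a `reason`; the field names, the sample paths, and the `min_reports` threshold are hypothetical.

```python
from collections import Counter


def sources_needing_review(reports, min_reports=2):
    """Return (source, count) pairs flagged at least min_reports times, most-flagged first."""
    counts = Counter(r["source"] for r in reports)
    flagged = [(src, n) for src, n in counts.items() if n >= min_reports]
    # Sort by descending count, then by name so ties are ordered predictably.
    return sorted(flagged, key=lambda item: (-item[1], item[0]))


# Made-up employee reports for illustration.
reports = [
    {"source": "wiki/vpn-setup", "reason": "outdated"},
    {"source": "wiki/expense-policy", "reason": "incorrect"},
    {"source": "wiki/vpn-setup", "reason": "incorrect"},
    {"source": "wiki/vpn-setup", "reason": "outdated"},
    {"source": "wiki/expense-policy", "reason": "outdated"},
    {"source": "wiki/holidays", "reason": "outdated"},
]

review_queue = sources_needing_review(reports)
print(review_queue)
```

The threshold keeps one-off reports from crowding the queue; a content owner still decides whether each flagged source is updated, merged, or archived.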

5. Complement AI with human knowledge infrastructure

Maintain human-curated documentation and expert directories. AI is a multiplier, not a replacement.

Human + AI: Why the Hybrid Approach Wins

The best-performing organizations don’t chase “full automation.” They combine the strengths of:

- AI → speed, scalability, and cross-system search

- Humans → judgment, nuance, context, collaboration

This hybrid model reduces risk and increases accuracy. That’s why it’s the philosophy behind GoSearch’s approach to enterprise AI.

In practice, that means AI agents automate work with permission-aware access, contextual grounding, and source citations, so users can always see what the AI is basing its answers on.
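As a rough illustration of what permission-aware grounding with citations can look like, the Python sketch below filters documents by the user's groups before any matching happens, then returns the matching passages together with the source IDs an answer should cite. This is not GoSearch's implementation. The `Document` shape, the group model, and the naive keyword matching are assumptions made to keep the example small.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    # Hypothetical document shape with an access-control list of group names.
    doc_id: str
    text: str
    allowed_groups: frozenset


def retrieve(query, documents, user_groups):
    """Return documents the user may read that share at least one term with the query."""
    terms = set(query.lower().split())
    results = []
    for doc in documents:
        if not (doc.allowed_groups & user_groups):
            continue  # permission check runs before any matching
        if terms & set(doc.text.lower().split()):
            results.append(doc)
    return results


def build_context(query, documents, user_groups):
    """Assemble grounding passages plus the citations an answer should display."""
    hits = retrieve(query, documents, user_groups)
    return {
        "passages": [d.text for d in hits],
        "citations": [d.doc_id for d in hits],
    }


# Made-up corpus for illustration.
corpus = [
    Document("hr/salary-bands", "salary bands for engineering", frozenset({"hr"})),
    Document("eng/oncall", "engineering oncall rotation guide", frozenset({"eng", "hr"})),
]

context = build_context("engineering salary", corpus, user_groups=frozenset({"eng"}))
print(context["citations"])
```

Because restricted documents are dropped before retrieval, they never reach the model's context, so an answer cannot leak them, and every passage that does reach the model arrives with a citation the user can check.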

AI Is Powerful When Used Responsibly

AI limitations in enterprise search don’t make AI less valuable. They simply highlight the need for context, governance, and realistic expectations.

If you acknowledge these limitations upfront and design around them, AI becomes a trusted partner that accelerates work and amplifies human expertise.

Want to see how GoSearch uses agentic AI with proper guardrails and permission-aware context?

Book a demo and explore what responsible enterprise AI looks like.

FAQ: AI Limitations in Enterprise Search

1. Why does AI hallucinate?

Generative AI predicts text from learned patterns rather than looking up verified facts. Without citations or permission-aware grounding, it can invent answers.

2. What are the biggest AI limitations in enterprise search?

The top challenges include hallucinations, outdated or conflicting content, lack of context, and limited understanding of complex business logic.

3. How can businesses reduce AI inaccuracies?

Improve content quality, adopt tools with strong governance, keep humans in the loop, and use platforms that cite sources (like GoSearch).

4. Is agentic AI safe for enterprise workflows?

Yes, provided agents operate with guardrails, permissions, and full auditability. Without those controls, agentic systems can overreach or act incorrectly.

5. How do you keep AI search accurate over time?

Maintain data hygiene, monitor engagement analytics, set governance rules, and continuously refine or re-rank authoritative sources.
